Why Most AI Pilots Never Make It to Production

The gap between a working demo and a deployed system is wider than most teams expect — and it's where the real cost of AI lives.

Walk into any company today and you'll find AI everywhere. ChatGPT is open in a browser tab. A custom agent runs in a Slack channel. The marketing team has a prompt library. The CTO ran a workshop on building with LLMs last quarter. By every measurable signal, AI adoption has arrived.

And yet, when you ask whether any of it is actually running the business — handling real customer requests, processing real transactions, deciding real outcomes — the answer is almost always no.

That gap between "we built something" and "we deployed something" is the most expensive line item in enterprise AI right now. It's also the one almost no one is talking about honestly.

The Numbers Are Worse Than You Think

A July 2025 study from MIT's NANDA initiative, The GenAI Divide: State of AI in Business 2025, analyzed over 300 enterprise AI deployments. The headline finding: 95% of organizations deploying generative AI saw zero measurable return — not low return, zero.

Research from IDC and Lenovo paints the same picture from a different angle. For every 33 AI proofs of concept a company launched, only four graduated to production. That's an 88% failure rate before you even get to the question of whether the production deployment delivers ROI.
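For readers who want to check the arithmetic, here is a minimal sketch in Python. The two input figures come from the paragraph above; the variable names are only illustrative.

```python
# Figures quoted above from the IDC and Lenovo research.
launched = 33            # proofs of concept started
reached_production = 4   # proofs of concept that shipped

graduation_rate = reached_production / launched
failure_rate = 1 - graduation_rate

# Print both rates rounded to whole percentages.
print(f"Graduation rate: {graduation_rate:.0%}")
print(f"Failure rate: {failure_rate:.0%}")
```

Note that this counts only whether a pilot shipped. It says nothing about whether the shipped system paid for itself.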

And the trend is getting worse, not better. According to S&P Global's 2025 enterprise survey, 42% of companies abandoned most of their AI initiatives in 2025, up sharply from 17% in 2024.

The pattern has a name now. Analysts call it "AI pilot purgatory" — the gap between a promising demo and an actual production deployment, where projects are neither cancelled nor shipped, just perpetually extended.

Why Demos Pass and Production Fails

The temptation is to blame the models. But the MIT report is direct on this point: the core barrier to scaling is not infrastructure, regulation, or talent. It is learning. Generic AI tools work in a demo because demos run on curated datasets, controlled inputs, and a forgiving audience. Production runs on years of inconsistent data, real customers behaving unpredictably, and zero tolerance for the kind of confidently wrong answers LLMs produce when they're left unsupervised.

A few specific failure modes show up again and again:

The integration tax. A model that works in isolation has to plug into systems that don't speak its language. CRM records, billing platforms, support tools, internal databases — each with its own auth, its own schema, its own quirks. Stitching them together is unglamorous work that rarely gets funded properly.

No observability. When an AI agent makes a decision, can you see what it did and why? In most pilots, the answer is no. That's fine for a demo. It's a non-starter for anything regulated, customer-facing, or financially material.

No governance. Who is allowed to edit the prompts? Who gets notified when an agent fails? Who controls spend? Most pilots ignore these questions entirely. Then someone in compliance asks them, and the project freezes.

Vendor lock-in by default. The model you started with may not be the model you want in 12 months. Pricing changes. Capabilities change. New providers emerge. If your system is hard-wired to one LLM, you've already lost the leverage that makes long-term AI economics work.
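To make the last three failure modes concrete, here is a minimal sketch of a thin gateway that sits between an agent and its model. It is an illustration under stated assumptions, not a reference design: `AgentGateway`, `AuditRecord`, `BudgetExceeded`, and the two stub providers are hypothetical names invented for this example, and the stubs stand in for real model SDK calls. The idea is that every call is logged (observability), spend is capped (governance), and the provider can be swapped without touching calling code (no lock-in).

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical provider shape: prompt in, (response text, cost in cents) out.
Provider = Callable[[str], Tuple[str, int]]


class BudgetExceeded(RuntimeError):
    """Raised when the spend cap has already been reached."""


@dataclass
class AuditRecord:
    timestamp: float
    provider: str
    prompt: str
    response: str
    cost_cents: int


@dataclass
class AgentGateway:
    providers: Dict[str, Provider]
    active: str
    budget_cents: int
    spent_cents: int = 0
    audit_log: List[AuditRecord] = field(default_factory=list)

    def switch(self, name: str) -> None:
        # Swapping models is a config change, not a rewrite.
        if name not in self.providers:
            raise KeyError(f"unknown provider: {name}")
        self.active = name

    def ask(self, prompt: str) -> str:
        # Governance: refuse new calls once the cap has been reached.
        if self.spent_cents >= self.budget_cents:
            raise BudgetExceeded(f"spent {self.spent_cents} of {self.budget_cents} cents")
        text, cost = self.providers[self.active](prompt)
        self.spent_cents += cost
        # Observability: record what was asked, who answered, and the cost.
        self.audit_log.append(
            AuditRecord(time.time(), self.active, prompt, text, cost)
        )
        return text


# Stub providers standing in for real model APIs (assumptions for the demo).
def stub_provider_a(prompt: str) -> Tuple[str, int]:
    return f"A says: {prompt.upper()}", 2


def stub_provider_b(prompt: str) -> Tuple[str, int]:
    return f"B says: {prompt.lower()}", 1


gateway = AgentGateway(
    providers={"a": stub_provider_a, "b": stub_provider_b},
    active="a",
    budget_cents=3,
)
first = gateway.ask("Summarize ticket 123")
gateway.switch("b")
second = gateway.ask("Summarize ticket 123")
print(gateway.spent_cents, [record.provider for record in gateway.audit_log])
```

A real deployment would also need persistent logs, per-team budgets, and alerting. The point of the sketch is only that these controls belong in one place around the model, rather than scattered across each pilot.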

What the 5% Are Doing Differently

The companies that actually make it to production share a few patterns.

They start with a specific business pain, not a technology demo. Lumen Technologies didn't deploy AI because their CTO wanted to. They deployed it because research for customer outreach typically took a seller four hours, and with generative AI it now takes 15 minutes. Getting four hours back each week is worth $50 million in revenue over a 12-month period. The pain was named in dollars before any model was selected.

They buy and partner rather than build from scratch. The MIT data is striking on this point: strategic partnerships with external vendors reach deployment 67% of the time compared to 33% for internal builds. The instinct to build everything in-house — especially in regulated industries — is the single most reliable predictor of a stalled project.

They focus on back-office wins, not front-line theater. More than half of generative AI budgets are devoted to sales and marketing tools, yet the biggest ROI sits in back-office automation — eliminating business process outsourcing, cutting external agency costs, and streamlining operations. Document review, procurement workflows, risk analysis, ticket summarization. The work no one wants to do but every business depends on.

They invest in the infrastructure first. A WorkOS analysis of dozens of enterprise deployments found that winning programs invert typical spending ratios, earmarking 50-70% of the timeline and budget for data readiness — extraction, normalization, governance metadata, quality dashboards, and retention controls. The unglamorous foundation is what separates pilots that ship from pilots that drift.

The Shift That's Coming

Most coverage of the AI pilot failure rate frames it as a competence problem — companies aren't sophisticated enough, teams aren't skilled enough, leadership isn't bought in enough. That framing misses the bigger story.

The reason 95% of organizations see no return from generative AI isn't that the technology doesn't work. It's that the supporting infrastructure (the orchestration layer, the observability layer, the governance layer) is still being invented. The companies succeeding right now are the ones who recognize that building the AI is maybe 20% of the work. The other 80% is everything that has to exist around the AI to make it survive contact with a real business.

That gap is the most interesting opportunity in enterprise technology right now. The next decade of AI won't be won by whoever has the smartest model. It will be won by whoever makes it easiest to deploy one reliably.

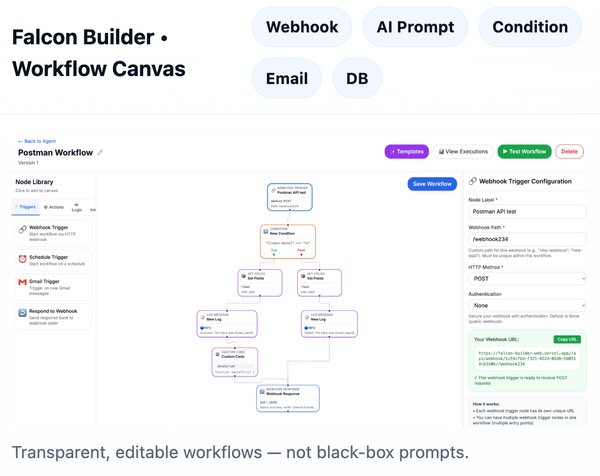

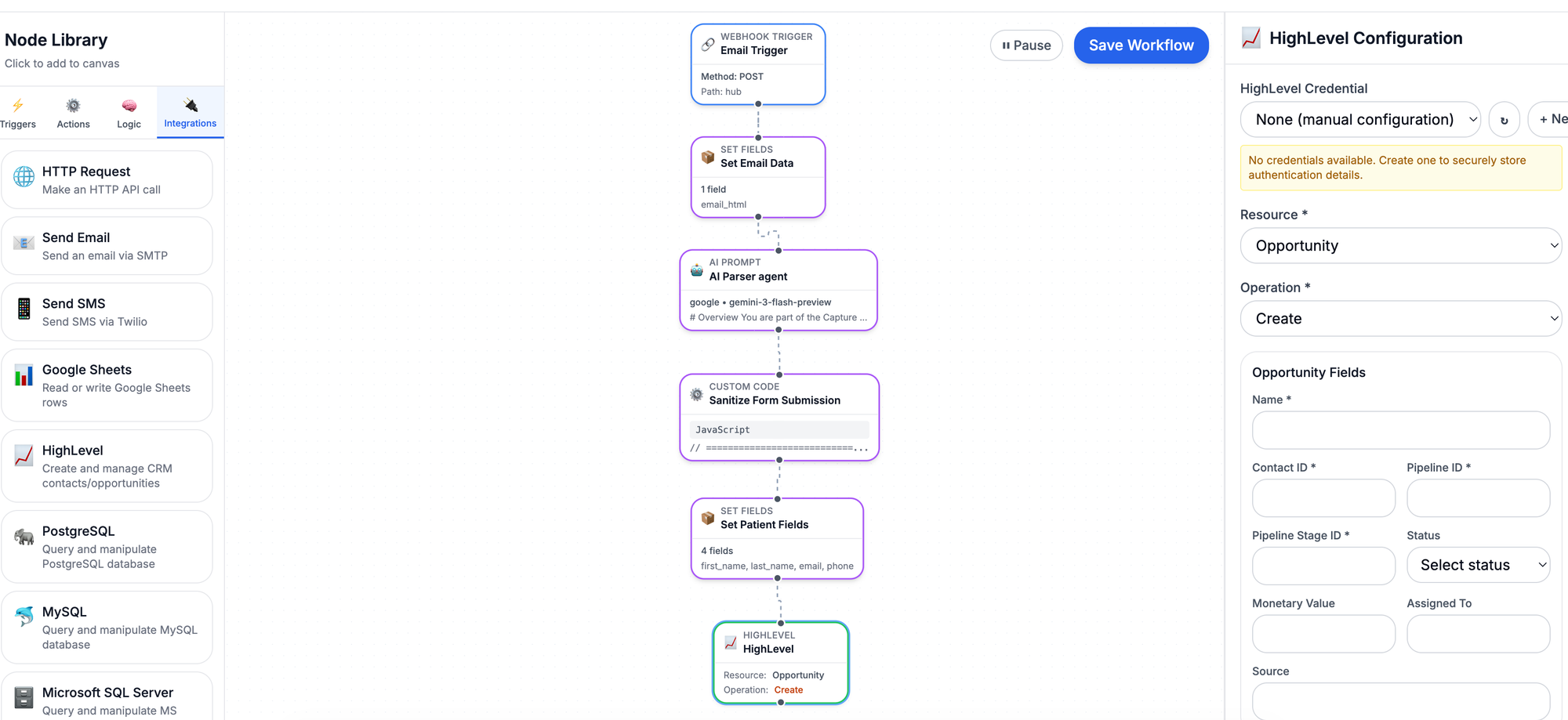

Falcon Builder by NeoSky AI is officially live.

It’s a no-code platform for building, deploying, and running AI agents and workflows — with real execution, real integrations, and real usage controls.

You can:

• Vibe-code your AI agent with our AI Agent Generator

• Trigger workflows via webhooks, schedules, or email

• Use OpenAI, Anthropic, Gemini, or Ollama

• Connect directly to databases and APIs

• Monitor executions with full logs and limits

• Scale from free to enterprise without rebuilding anything

Start building here: 👉 https://www.falconbuilder.dev